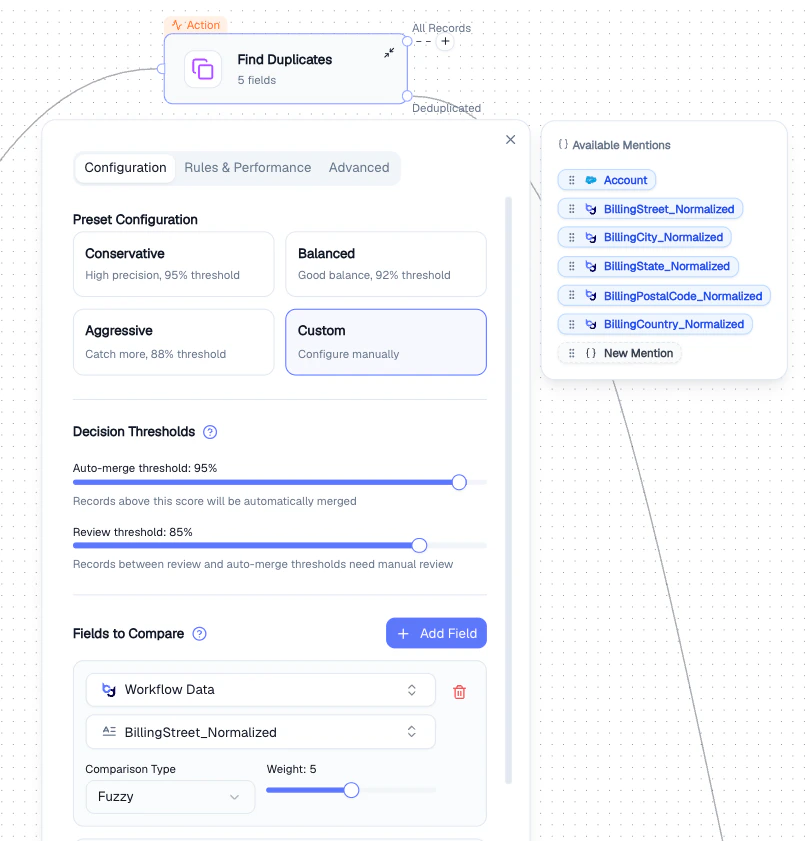

Detects duplicate records using fuzzy matching and configurable thresholds. It outputs two paths, All Records and Deduplicated, so you can handle duplicates and clean records differently.

Configuration tab

| Setting | Description |
|---|---|
| Preset Configuration | Choose a starting point - Conservative (95%), Balanced (92%), Aggressive (88%), or Custom |
| Auto-merge threshold | Records scoring above this are merged automatically |
| Review threshold | Records between review and auto-merge thresholds need manual review |
| Fields to Compare | Select fields and set comparison type (Fuzzy or Exact) and weight |
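
To make the interaction between field weights and the two thresholds concrete, here is a minimal sketch in Python. It is not the product's implementation: the field list, the review threshold value, the use of `difflib.SequenceMatcher` as the fuzzy comparator, and the weighted-average scoring are all assumptions chosen for illustration.

```python
from difflib import SequenceMatcher

# Hypothetical field configuration: (field name, comparison type, weight).
FIELDS = [
    ("name", "fuzzy", 0.5),
    ("email", "exact", 0.3),
    ("city", "fuzzy", 0.2),
]

AUTO_MERGE_THRESHOLD = 0.92  # assumed to mirror the Balanced preset (92%)
REVIEW_THRESHOLD = 0.80      # illustrative value, not taken from the product


def field_similarity(a: str, b: str, kind: str) -> float:
    """Return a similarity in [0, 1] for a single field."""
    if kind == "exact":
        return 1.0 if a == b else 0.0
    # Stand-in fuzzy comparator; the real matcher may use another algorithm.
    return SequenceMatcher(None, a, b).ratio()


def pair_score(rec_a: dict, rec_b: dict) -> float:
    """Weighted average of the per-field similarities."""
    total_weight = sum(weight for _, _, weight in FIELDS)
    weighted = sum(
        weight * field_similarity(rec_a.get(field, ""), rec_b.get(field, ""), kind)
        for field, kind, weight in FIELDS
    )
    return weighted / total_weight


def classify(score: float) -> str:
    """Map a score to an action; inclusive boundaries are an assumption."""
    if score >= AUTO_MERGE_THRESHOLD:
        return "auto_merge"
    if score >= REVIEW_THRESHOLD:
        return "review"
    return "distinct"
```

In this sketch, raising a field's weight makes disagreement on that field cost more of the overall score, which is why an Exact comparison on a heavily weighted field can pull an otherwise similar pair below the review threshold.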

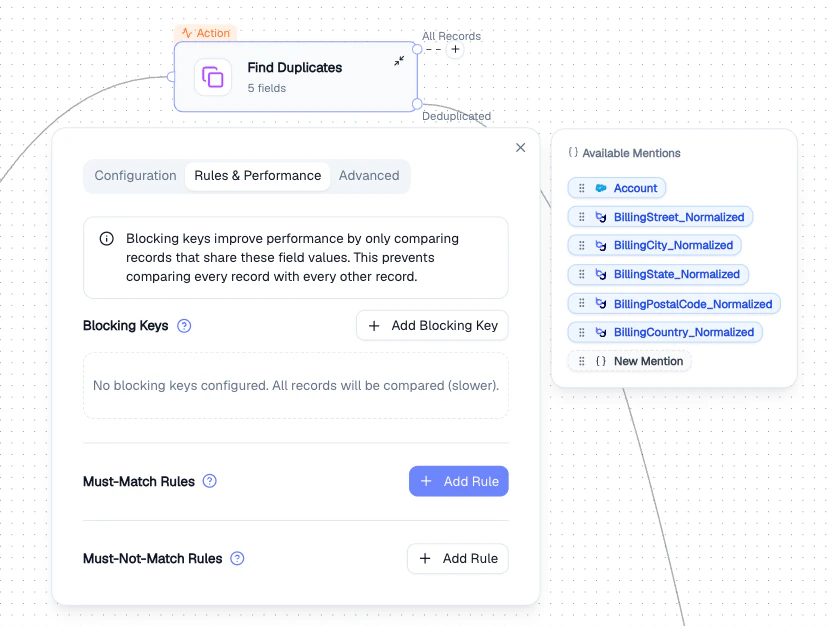

| Setting | Description |
|---|---|
| Blocking Keys | Only compare records that share a blocking key value - dramatically improves performance on large datasets |
| Must-Match Rules | Records must match on these fields to be considered duplicates |
| Must-Not-Match Rules | Records matching on these fields are never considered duplicates |
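
The sketch below illustrates how blocking keys and the two rule types could narrow the set of pairs that get scored. The function name `candidate_pairs` and its parameters are hypothetical, and the rule semantics follow the descriptions in the table as written; the product's actual behavior may differ.

```python
from collections import defaultdict
from itertools import combinations


def candidate_pairs(records, blocking_key, must_match=(), must_not_match=()):
    """Yield (i, j) index pairs that are worth scoring."""
    # Group record indexes by blocking key value.
    blocks = defaultdict(list)
    for idx, rec in enumerate(records):
        key = rec.get(blocking_key)
        if key:  # assumption: records without a key value are never compared
            blocks[key].append(idx)

    # Only pairs inside the same block are considered at all.
    for members in blocks.values():
        for i, j in combinations(members, 2):
            a, b = records[i], records[j]
            # Must-Match: any disagreement disqualifies the pair.
            if any(a.get(f) != b.get(f) for f in must_match):
                continue
            # Must-Not-Match: agreement on any of these fields disqualifies it.
            if any(a.get(f) is not None and a.get(f) == b.get(f)
                   for f in must_not_match):
                continue
            yield i, j
```

With n records, comparing every pair costs n(n-1)/2 comparisons; blocking replaces that with the sum of the same quantity over each block, which is far smaller when blocks are small.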

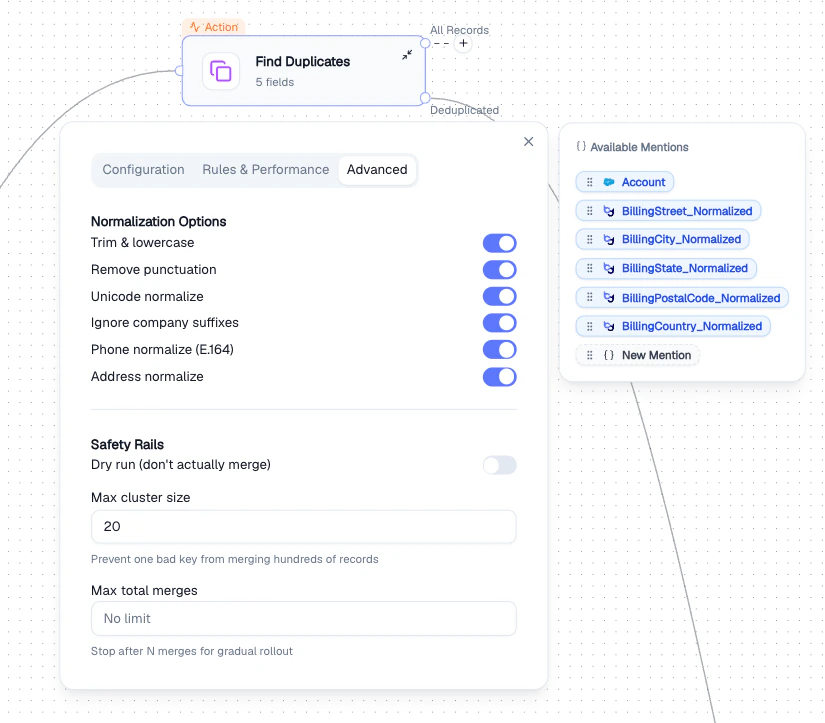

Advanced tab

Normalization Options - applied before comparison:

- Trim & lowercase

- Remove punctuation

- Unicode normalize

- Ignore company suffixes (e.g., “Inc”, “LLC”)

- Phone normalize (E.164)

- Address normalize
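
As a rough illustration of what several of these options do to a value before comparison, here is a sketch using only the Python standard library. The suffix list, the NFKC normalization form, and the default country code are assumptions, and the phone helper is a naive approximation of E.164 formatting rather than a validating implementation. Address normalization is omitted because it depends on locale-specific rules.

```python
import re
import string
import unicodedata

# Illustrative suffix list; the product's actual list is not documented here.
COMPANY_SUFFIXES = {"inc", "llc", "ltd", "corp", "co"}


def normalize_text(value: str, strip_suffixes: bool = False) -> str:
    """Unicode normalize, trim, lowercase, and remove ASCII punctuation."""
    value = unicodedata.normalize("NFKC", value)  # normalization form is an assumption
    value = value.strip().lower()
    value = value.translate(str.maketrans("", "", string.punctuation))
    tokens = value.split()
    if strip_suffixes:
        # Drop trailing company suffixes only, so mid-name words survive.
        while tokens and tokens[-1] in COMPANY_SUFFIXES:
            tokens.pop()
    return " ".join(tokens)


def normalize_phone(value: str, default_country_code: str = "1") -> str:
    """Naive E.164-style output: '+' followed by digits only."""
    digits = re.sub(r"\D", "", value)
    if value.strip().startswith("+"):
        return "+" + digits
    # Assumption: numbers without '+' belong to the default country.
    return "+" + default_country_code + digits
```

After normalization, values such as "Acme, Inc." and "ACME Inc" compare as equal, so the fuzzy score reflects real differences rather than formatting noise.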

Safety Rails - prevent unintended mass merges:

| Setting | Description |
|---|---|
| Dry run | Simulate without actually merging |
| Max cluster size | Prevents one bad key from merging hundreds of records |
| Max total merges | Stops after N merges for gradual rollout |
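
The following sketch shows one way these three safety rails could interact when a list of duplicate clusters is processed. The function `apply_merges`, its parameters, the report keys, and the choice to count each absorbed record as one merge are all hypothetical.

```python
def apply_merges(clusters, merge_fn, dry_run=True,
                 max_cluster_size=10, max_total_merges=100):
    """Process clusters of duplicate record IDs under safety limits.

    Returns a report; merge_fn is only called when dry_run is False.
    """
    clusters = list(clusters)
    report = {"selected": [], "skipped_too_large": [], "not_reached": []}
    merges_done = 0

    for pos, cluster in enumerate(clusters):
        # Max cluster size: a suspiciously large cluster is skipped entirely.
        if len(cluster) > max_cluster_size:
            report["skipped_too_large"].append(cluster)
            continue

        # Assumption: merging k records into one counts as k - 1 merges.
        merges_needed = len(cluster) - 1
        if merges_done + merges_needed > max_total_merges:
            # Max total merges: stop and record what was left unprocessed.
            report["not_reached"] = clusters[pos:]
            break

        if not dry_run:
            merge_fn(cluster)
        merges_done += merges_needed
        report["selected"].append(cluster)

    return report
```

Running once with `dry_run=True` and inspecting the report before rerunning with `dry_run=False` mirrors the preview-then-commit workflow these settings are meant to support.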

Output

Two output paths:

- All Records - every record with duplicate scores attached

- Deduplicated - clean dataset with duplicates removed
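
To show how the two paths relate, here is a small sketch that derives both from the same input. The function name, the `duplicate_of` mapping, and the attached field names `duplicate_of` and `duplicate_score` are hypothetical; the actual output schema may differ.

```python
def split_outputs(records, duplicate_of):
    """Build both output paths.

    duplicate_of maps a record's index to (index of the record kept, score)
    for every record that was identified as a duplicate.
    """
    all_records = []
    deduplicated = []
    for idx, rec in enumerate(records):
        match = duplicate_of.get(idx)
        # All Records: every record, annotated with its duplicate information.
        annotated = dict(rec)
        annotated["duplicate_of"] = match[0] if match else None
        annotated["duplicate_score"] = match[1] if match else None
        all_records.append(annotated)
        # Deduplicated: only records that were not flagged as duplicates.
        if match is None:
            deduplicated.append(rec)
    return all_records, deduplicated
```

A downstream step can then route the annotated records to a review queue while the deduplicated set continues through the main pipeline.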

Best Practices

- Start with the Balanced preset and adjust thresholds based on results

- Use Blocking Keys for large datasets - comparing every record pair is expensive

- Enable Dry run first to preview results before committing merges

- Set Max total merges for gradual rollout on critical data

Related Nodes

- Bond Node - matches records across different entities, not within the same dataset

- Data Normalization - clean field values before duplicate detection for better accuracy